Making build pain visible

03 November 2009

Posted in Visualisation

The practice of continuous integration is gaining widespread adoption, and almost every project I have been involved in over the past few years has used a continuous integration server to maintain an up-to-date view of the status of the build. Developers can look at the status page of the server or use tools such as CCTray and CCMenu to find out whether a recent check-in has broken the build. Some teams also use build lights, like these for example, or other information radiators to make the status of the build visible.

The reason why developers need an up-to-date build status is a common, and good, practice: new check-ins are only allowed when the build is known to be good. If it is broken, chances are that someone is trying to fix it, and dumping a whole new set of changes onto them would undoubtedly make that task harder. Similarly, while the server is building, nobody knows for sure whether the build will succeed, and checking in changes would make fixing the build harder, should it fail.

To recap: the build must be good for a developer to be able to check in. On one of our projects this was becoming a rare occurrence, though. In fairness, the build performed fairly comprehensive checks in a complex integration environment, involving an ESB and an SSO solution. The team had already relegated some long-running tests to a different build stage, and they had split the short build, i.e. the build that determines whether check-ins are allowed, into five parallel builds, bringing build time down from over 45 minutes to under ten. Still, developers often found themselves waiting in a queue, maintained with post-its on a wall, for a chance to check in their changes. Not only that, but everybody felt the situation was getting worse and that the build was broken more often. This was obviously a huge waste and I was keen to make it visible to management using a visualisation.

Buildlines visualisation

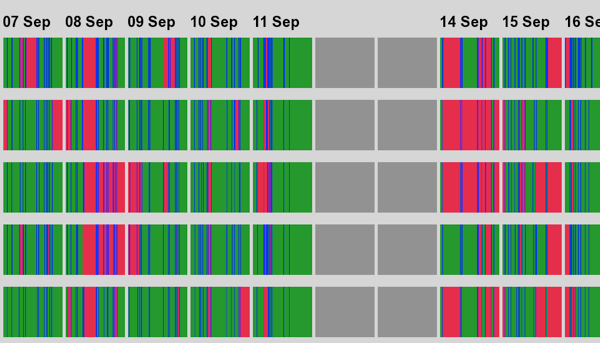

After some experimentation I decided on a variation of spark lines for the build status. Each of the builds gets its own line and the colour shows the status of the build at any given point in time, green for good, red for broken, and blue for building. I blanked out the weekends and stretched the time during the days so that only the hours between 8am and 7pm are visible. The resulting visualisation looked like this: (Click through for the full-size version.)
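The time stretching described above can be sketched in a few lines of Ruby. This is a minimal illustration and not code taken from the actual script: the helper names (`weekdays_between`, `visible_seconds`, `x_position`) and the `pixels_per_hour` parameter are assumptions made for this example. Only the 8am to 7pm window and the skipped weekends come from the description.

```ruby
require "date"

# Visible window per working day, as described in the post: 8am to 7pm.
DAY_START_HOUR = 8
DAY_END_HOUR = 19
VISIBLE_SECONDS_PER_DAY = (DAY_END_HOUR - DAY_START_HOUR) * 3600

# Hypothetical helper: number of Monday-to-Friday days in [from_date, to_date).
def weekdays_between(from_date, to_date)
  (from_date...to_date).count { |d| !(d.saturday? || d.sunday?) }
end

# Seconds of "visible" time between the chart origin (a Date) and the Time t.
# Weekends contribute nothing; times outside 8am-7pm are clamped to the window.
def visible_seconds(origin_date, t)
  day = t.to_date
  full_days = weekdays_between(origin_date, day)
  return full_days * VISIBLE_SECONDS_PER_DAY if day.saturday? || day.sunday?

  into_day = t.hour * 3600 + t.min * 60 + t.sec - DAY_START_HOUR * 3600
  full_days * VISIBLE_SECONDS_PER_DAY + into_day.clamp(0, VISIBLE_SECONDS_PER_DAY)
end

# Horizontal position of time t, given an assumed scale in pixels per hour.
def x_position(origin_date, t, pixels_per_hour)
  visible_seconds(origin_date, t) * pixels_per_hour / 3600.0
end
```

With this mapping, a point on Saturday afternoon and 8am on the following Monday land on the same x coordinate, which is what makes the weekend disappear from the chart.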

The zoomed-out version clearly shows that matters were getting worse. The first two weeks in this diagram only show a few broken builds that, with the exception of one episode on build number 4 on Aug 11/12, get fixed relatively quickly. Looking at the last week and a half, the picture changes quite dramatically:

On Monday, Sep 7, build 1 is broken for a while, which can happen. Later in the day build 2 breaks and is only fixed early on Tuesday. Again, this alone would not concern me, and while it is generally not such a great idea to leave a broken build behind, sometimes it is important to go home and get some rest.

At this point I should probably clarify that, for a developer to be able to check in, all the builds in this diagram need to be green. Each test in each build of this stage is significant. Long-running, brittle tests, which include end-to-end integration tests with a mainframe system, have been moved to a different build stage and are treated separately.

A real reason for concern is that around noon on Sep 8, all builds break at the same time. This is unlikely to be the result of a code change, because for a single change to break all builds it would have had to affect at least one test in each of the builds. Possible but not likely. A more reasonable explanation for this failure is a problem with the environment, maybe a database that is not responding or an SSO server that can no longer authenticate the test users. Similar problems can be seen on Sep 14 during the morning, and on Sep 15 in the afternoon. They are not completely new, either, as looking at Aug 28 reveals.
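The same reasoning can be applied to the data directly instead of reading it off the chart. The following is a rough sketch, not part of the buildlines script. It assumes the broken periods of each build are already available as sorted, non-overlapping `[start, finish]` pairs. The method names and the use of plain numbers for times are choices made for this example.

```ruby
# Intersect two sorted lists of non-overlapping [start, finish] intervals.
def intersect(a, b)
  result = []
  i = j = 0
  while i < a.length && j < b.length
    start = [a[i][0], b[j][0]].max
    finish = [a[i][1], b[j][1]].min
    result << [start, finish] if start < finish
    # Advance whichever interval ends first.
    if a[i][1] < b[j][1]
      i += 1
    else
      j += 1
    end
  end
  result
end

# Periods during which every build is broken at the same time.
# broken_by_build maps a build name to its list of broken intervals.
def all_broken(broken_by_build)
  broken_by_build.values.reduce { |acc, intervals| intersect(acc, intervals) } || []
end
```

Any interval this returns is a candidate for an environment problem rather than a code change, for the reason given above. It is only a hint, though, since a single change could in principle break a test in every build.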

Environment problems like these can be extremely frustrating for a development team. A build that is broken by the environment rather than by a code change leaves the developers in an awkward position. They can either wait until the environment is fixed, which often relies on a separate team that may have different priorities, or they can continue to check in on the assumption that the build isn't really broken. The latter is, of course, playing with fire, as the team now effectively works without continuous integration.

Visualisations such as this one can help management get clarity on environment problems, and hopefully support a case for improving the build environment.

The buildlines script

Unlike for the visualisations I have written about on this blog so far, this time I needed to write a bit more code, as I could not find a tool to draw the spark lines for me. The data acquisition step was easy, though, because the team was using the Cruise continuous integration server, which allowed me to get information about past builds through a web-based API. I used the cURL command, which, by the way, is available for Windows, too.

curl -o Shortbuild1.csv "http://servername:8153/cruise/properties/search?pipelineName=WebFrontEnd-Dev&stageName=Shortbuild&jobName=Shortbuild1&limitCount=1000"

curl -o Shortbuild2.csv "http://servername:8153/cruise/properties/search?pipelineName=WebFrontEnd-Dev&stageName=Shortbuild&jobName=Shortbuild2&limitCount=1000"

...
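Because the commands differ only in the job name, they can also be generated. The sketch below rebuilds the URL pattern shown above in Ruby and prints one curl command per job. The function name `cruise_properties_url` is made up for this example, and the list contains only the two job names that appear above. Any further names would have to match the jobs actually configured on the server.

```ruby
require "uri"

# Build the properties search URL for one job, following the pattern of the
# curl commands shown in the post.
def cruise_properties_url(host, pipeline, stage, job, limit = 1000)
  query = URI.encode_www_form([
    ["pipelineName", pipeline],
    ["stageName", stage],
    ["jobName", job],
    ["limitCount", limit]
  ])
  "http://#{host}/cruise/properties/search?#{query}"
end

# Only the two job names shown in the post; extend the list as needed.
jobs = ["Shortbuild1", "Shortbuild2"]
jobs.each do |job|
  url = cruise_properties_url("servername:8153", "WebFrontEnd-Dev", "Shortbuild", job)
  puts %(curl -o #{job}.csv "#{url}")
end
```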

Normally I advocate splitting the data processing stage from the actual visualisation, but in this case, because I was going to write the visualisation from scratch and the data coming from Cruise was in a pretty good format already, I decided to put everything into a single script. For that I chose Ruby and RMagick. The latter can be a pain to set up, but there are installers for Windows, on the Mac it can be installed using MacPorts, and presumably the Linux package management systems include it, too.

The script is available from this Bitbucket repository. It is relatively straightforward and I am not going to examine it in great detail in this post. What is noteworthy is that, internally, the script shows a separation of concerns, with one class reading the Cruise files, a second class scaling and drawing the lines and labels, and a main class that holds everything together. If you want to adapt this to a different continuous integration server, it should be possible to do so by simply writing a different DataFile class.
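To give an idea of what such a replacement reader might look like, here is a hypothetical sketch. It does not reproduce the interface of the DataFile class in the repository, which should be checked before adapting anything. The `Build` struct, the class name `SimpleCsvDataFile`, the `builds` method and the three-column input format (`start,end,result` with ISO 8601 timestamps) are all invented for illustration and are not the format Cruise produces.

```ruby
require "time"

# One finished build: when it ran and how it ended.
Build = Struct.new(:started_at, :finished_at, :status)

# Hypothetical reader for an invented export format with one build per line:
#   start,end,result
class SimpleCsvDataFile
  # Map the invented result strings onto the statuses used in the chart.
  STATUS = { "passed" => :good, "failed" => :broken }.freeze

  def initialize(text)
    @text = text
  end

  # Returns the builds in file order as Build structs.
  def builds
    @text.each_line.map(&:strip).reject(&:empty?).map do |line|
      started, finished, result = line.split(",")
      Build.new(Time.iso8601(started), Time.iso8601(finished), STATUS.fetch(result))
    end
  end
end
```

The point of the design is that the drawing code only ever sees start times, end times and statuses, so the server-specific parsing stays in one place.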

To run the script simply pass the list of build status files as command line parameters to it:

ruby buildlines.rb Shortbuild*.csv

The output will be written into a file named buildlines.png in the working directory. If you prefer a different format you can change the extension of the filename in the script, and RMagick, provided it supports the format, will magically write the corresponding format.

The visualisation script can be downloaded from Bitbucket. Simply select one of the zipped versions of the "tip" snapshot.